On Artificial Intelligence

And why we're not doomed

So I know I’ve generally stopped writing, but I continue to exist in the world and I do occasionally get a bee in my bonnet. Given the proliferation of AI image generation and ChatGPT iterations, it’s certainly become en vogue to talk about the possibilities of doom and despair from these tools. Leaders such as Elon Musk have said we need a moratorium on the research.1

Today I listened to the excellent EconTalk podcast with Eliezer Yudkowsky on his essay in Time regarding the dangers of AI and the need to shut it down. This conversation, rather ironically for EconTalk, doesn’t tackle economics issues at all but rather the existential dangers of AI destroying humanity. Regardless, I wanted to tackle the economics of why I’m optimistic about jobs and automation in this post, but it started to get away from me and I will publish that as a new post shortly.

So let’s get on to why AI won’t kill us all. And just as a note, I will use AI instead of AGI (artificial general intelligence) just to be more readable, but know that I’m basically conflating the two in this post.

Knowledge Problem

The beauty of emergent order is pretty fundamental to my thinking and you can read this post of mine to get a good idea of just why it’s so amazing. The idea of emergent order versus central planning is also fundamental to the idea of AI doomerism. The intellectual history of central planning is that if you just have enough people who are smart enough, they are able to plan and make things better than naturally chaotic interactions will if left alone. This is as fundamentally false now as when the Soviet Union introduced its first five-year plan in 1928.

The Soviets were equally sure that they finally had the means to get all the knowledge necessary to effectively plan what needed to be produced and where. The AI doomers effectively think the same is true, even if they wouldn’t put it in those words. Basically the idea is that an AI is capable of taking all information and using it to exploit humans to extinction.

Unfortunately, an AI is only as good as its models. The issue with central planning has never been a lack of intelligence in the people making the decisions, it’s that you can never have all the inputs involved in solving any particular issue. And on top of that, it’s impossible to even model all the inputs, as one of the major inputs is the opportunity costs of everyone involved. Basically if you need X person (or AI or whatever) to do something, at some point in your optimization, the other party will want to go do something else (we’ll get back to this point later). All of these inputs are so varied that it’s just impossible to know all of them, so the solution is to just optimize for a small problem set we can control.

This means AI might be great at helping a single factory know how much to produce based on the price of steel or whatever component. But it can’t optimize for everything because it just can’t know everything that will go into making the price of steel exactly what it is. Because prices are quantifiable, we think of everything around them as such, but the amount of information in any given price is astronomical and completely unquantifiable.

The iteration issue

One other thing to keep in mind is that computer chips are not neurons. They just don’t function the same way. AI works by learning patterns over massive datasets. But let’s say I discover a very cute little new mammal called a snozzle. Well, I can see two pictures of snozzles and instantly know if a third one is a snozzle or a raccoon (assuming we’re not in an alligator/crocodile situation where they are, in fact, very similar). An AI has to go over millions of photos to have an idea of what pattern distinguishes that snozzle from any other animal. And it can’t imagine things outside of the training set.

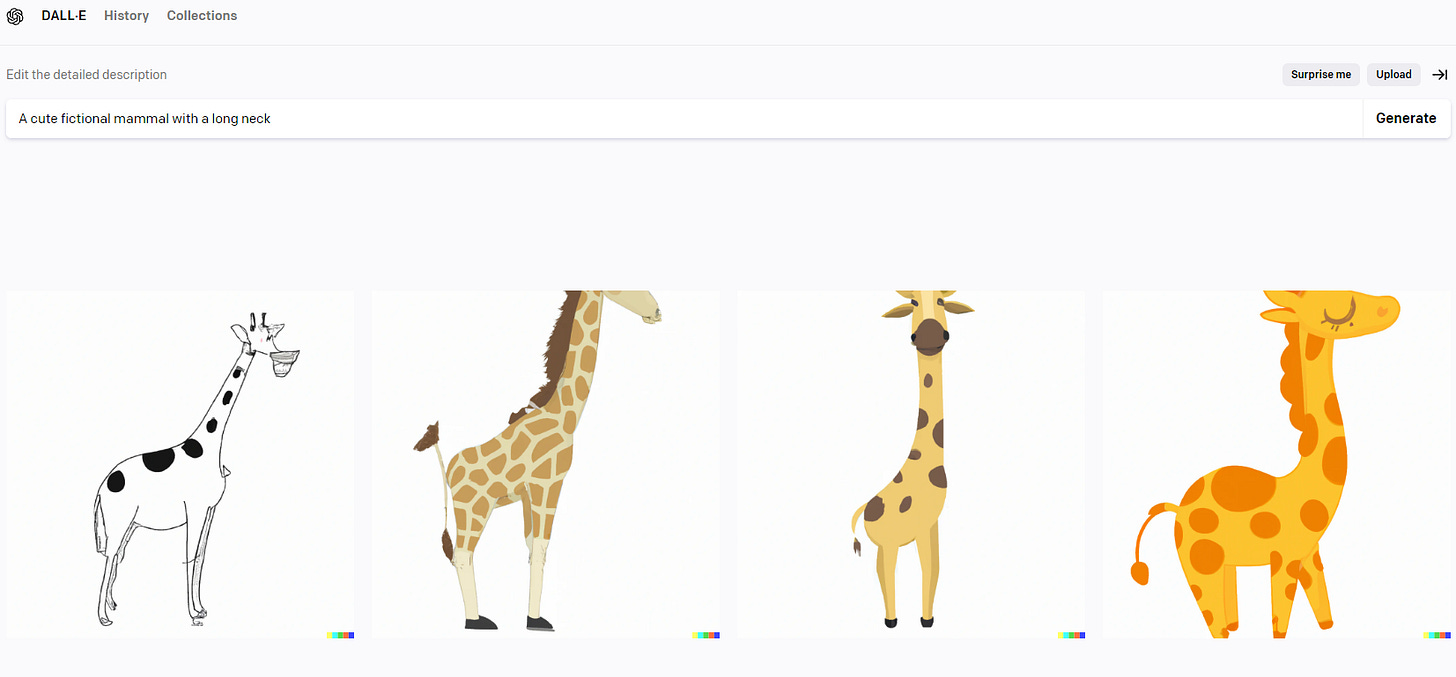

I actually tried to get an image of this invented animal generated by an AI and the results failed but illustrate my point rather perfectly. I prompted “a cute fictional mammal with a long neck” and it gave me drawings of giraffes. This is because it’s trained on animals and it can’t imagine a long-necked animal that isn’t a giraffe.

Now, where this gets really interesting is when thinking about how an AI might create simulations to train itself on. This is best illustrated by games such as chess or Go, where AIs are now very clearly better than humans. Those cases work because there is a very constrained set of rules and the computer is able to create billions of different combinations of plays virtually and analyze games that have never been played and positions that will never exist in a real game to figure out how to play. Though it is still bound by the rules, it doesn’t consider that turning over the board and punching your opponent in the face is another way to come out on top.

But this hits a snag when dealing with real world issues where iteration requires some physical action. As I detailed above about not knowing all the inputs, it’s simply impossible to accurately model the whole world, and something like a strength test of physical materials means you can’t just do millions of virtual iterations. You can try and make a model, but that model isn’t the world with its complete randomness.

The big example of this is self-driving cars, and it’s why I’m skeptical of them. As a human, you’re able to take the sum of your knowledge and know that if you have to choose between driving through a pile of leaves or a concrete barrier, you should choose the leaves. The AI has to fail at that task several times to understand and that, frankly, is just unacceptable. That’s why I see a viable version of self-driving as getting on a limited access highway and saying what exit you want off at. Not at all a small thing, especially when thinking of things like long-distance trucking and not needing to stop and rest, but an incremental change in society rather than something completely different.

So along those lines, discovering something truly novel about the world is probably much harder for an AI than a human because of this iteration problem.

AI isn’t a single thing

So one of the classic thought experiments of why AI is dangerous is the ‘paperclip problem’. While I recommend reading the whole article, it can be summarized as:

The notion arises from a thought experiment by Nick Bostrom (2014), a philosopher at the University of Oxford. Bostrom was examining the 'control problem': how can humans control a super-intelligent AI even when the AI is orders of magnitude smarter. Bostrom's thought experiment goes like this: suppose that someone programs and switches on an AI that has the goal of producing paperclips. The AI is given the ability to learn, so that it can invent ways to achieve its goal better. As the AI is super-intelligent, if there is a way of turning something into paperclips, it will find it. It will want to secure resources for that purpose. The AI is single-minded and more ingenious than any person, so it will appropriate resources from all other activities. Soon, the world will be inundated with paperclips.

It’s an interesting and frightening thought. But it suffers from some major issues, two of which I’ve already outlined here. The first is that the paperclip factory just can’t have any idea of everything involved with making a paperclip. And on top of that, the objective of a factory isn’t to make the most of a thing, it’s to make the most money out of making that thing. So I also object to the idea of maximizing paperclip production, because at some point there are so many paperclips in the world that nobody wants to buy them and you are operating at a loss.
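To make the "most money, not most paperclips" point concrete, here is a toy sketch. Everything in it is an assumption of mine for illustration: the straight-line demand curve, the $10 starting price, the $2 unit cost, and the function names. The only thing it shows is that once the price falls as the market floods, the most profitable output is a finite number, and cranking out more past that point loses money.

```python
# Toy model: all numbers and names here are hypothetical, chosen for illustration.

def price(quantity, max_price=10.0, slope=0.01):
    """Price buyers will pay per paperclip batch; it falls as the market floods."""
    return max(0.0, max_price - slope * quantity)

def profit(quantity, unit_cost=2.0):
    """Revenue minus cost for producing and selling `quantity` batches."""
    return quantity * (price(quantity) - unit_cost)

# Try a range of output levels and pick the one that makes the most money.
quantities = range(0, 2001, 100)
best_quantity = max(quantities, key=profit)

print("most profitable output:", best_quantity)
print("profit at that output:", profit(best_quantity))
print("profit at maximum output:", profit(2000))
```

Under these made-up numbers the best output sits well short of the maximum the factory could produce, and the largest run in the list operates at a loss.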

The second problem I already outlined is the iteration issue. Since we’re dealing with something physical, the AI can only try as fast as it’s possible to retool the factory, change manufacturing settings, etc. All the trial and error required for real optimization takes a fair amount of time.

But the point I have yet to see eloquently argued is that AI is always treated as a single nebulous entity, and that’s not how it will work. There will be millions of AIs, each with its own optimization goal. So the paperclip factory AI wants to make as many paperclips as possible, but the steel mill AI wants to get as much money for its steel as possible. The car factory AI also needs steel for its own optimization. These aren’t irreconcilable goals, as there is a point where it makes sense to sell a certain amount to the paperclip factory and a certain amount to the car manufacturer and a certain amount to the ship building AI and on and on and on.

This competition is entirely self-regulating and abracadabra, we’ve just made a marketplace. The same markets that limit the amount of stuff you can buy and sell now are a result of complex interactions. Until now those have been exclusively human interactions, but their existence isn’t dependent on being human at all. It’s a necessary part of scarcity.
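Here is a rough sketch of that steel market, under assumptions I am inventing purely for illustration: three buyer AIs, a fixed supply of 12 tons, and a simple diminishing-returns rule where each extra ton is worth less to a buyer than the previous one. The mill just sells each ton to whoever values it most at that moment. It is not a model of any real market, only a way to see the self-regulation at work.

```python
# Toy steel market: buyers, values, and the diminishing-returns rule are hypothetical.

def marginal_value(base_value, tons_held):
    """What one more ton is worth to a buyer that already holds `tons_held` tons."""
    return base_value / (1 + tons_held)

def allocate(supply_tons, base_values):
    """Sell the supply one ton at a time to whoever values the next ton most."""
    holdings = {name: 0 for name in base_values}
    for _ in range(supply_tons):
        top_bidder = max(
            base_values,
            key=lambda name: marginal_value(base_values[name], holdings[name]),
        )
        holdings[top_bidder] += 1
    return holdings

# Value of the first ton of steel to each buyer AI (made-up numbers).
buyers = {"paperclips": 60.0, "cars": 100.0, "ships": 80.0}
holdings = allocate(12, buyers)

for name, tons in holdings.items():
    print(name, tons)
```

With these numbers every buyer ends up with some steel and nobody gets all of it. The paperclip AI can want infinite paperclips all it likes, but it only gets the tons for which it is the best customer.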

Tying it all together

So the idea of AI doomerism is fundamentally the same folly as that of the progressives of the early 20th century. Which is not to say there is nothing dangerous here to worry about. Those ideas were the basis of central planning and eugenics and so managed to lead to the worst parts of both the USSR and the Third Reich. We need to be very wary of anyone thinking that prescriptively. Yet by just embracing the chaos, and having the intellectual humility to know that it’s impossible to have all of the knowledge necessary to centrally solve these sorts of problems, things are very likely to get much better with these tools doing what they can.

Though it should be taken with a massive lump of salt when the CEOs of OpenAI and Microsoft agree no other competitors should challenge them. It’s easy to have those points of view when you massively benefit from them.